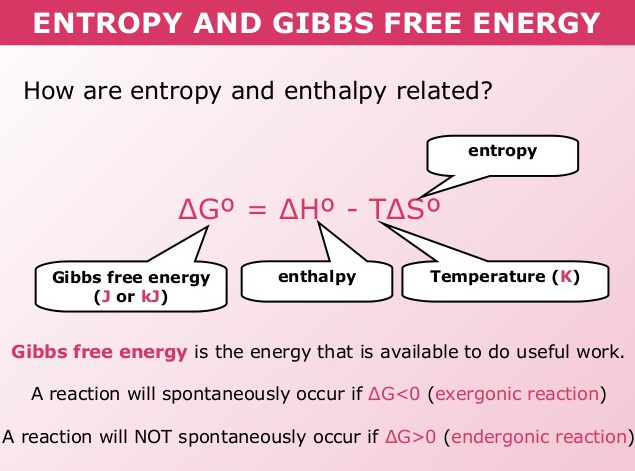

Entropy is one of the most confusing scientific terms, but it is also one of the concepts that people are most familiar with. Left to themselves, things increase in entropy as time goes on.

Something with more entropy (more disorderly) has a higher value (number) associated with it. Something with less entropy (more orderly) has a lower value (number) associated with it. The easiest way to picture how entropy works is to treat it as a measure of the disorder of your bedroom from Monday to Friday. On Monday all your clothes are neatly folded in your dresser or hanging in your closet (very orderly). By Friday clothes are on your floor, on your bed, and all over (very disorderly).

So as time passes a bedroom moves from low entropy (orderly) to high entropy (disorderly). Paint starts to become flaky and break off over time. These are all examples of how entropy affects our daily lives. We can also think about how entropy affects very small things like molecules. Entropy causes larger molecules to break down into smaller molecules over time. The symbol for the change in entropy is ΔS.

What is the relationship between entropy and energy? This is one of the most fundamental and misunderstood concepts in science today, and it is one of the arguments that come up between religious believers and non-religious believers. If you want to know more about these arguments then I suggest you use this link for an expanded explanation after you read the rest of this section. As you put energy into anything (including a molecule) you can decrease its entropy. This allows you to make a bigger molecule or a bigger anything. It is just like taking multiple eggs, scrambling them together, and then cooking them. The energy from the cooking process fuses them together into a larger mass and decreases the entropy of the egg. We can also think about the bedroom analogy that we were discussing before. From Monday to Friday the entropy of the bedroom increases (it becomes messier), but what happens to the bedroom over the weekend? The bedroom gets cleaned by a person putting energy into it. The energy is expended by the person when they lift the clothes up from the floor or bed and fold them into the closet or dresser.

How can entropy be asked about or displayed in chemistry? The first way is to think about each molecule as having a specific entropy and to look up the value (number) associated with that molecule on an entropy table. This will be important later, in the next section on spontaneity. The second way is to look at the different states of matter between the products and reactants of a chemical equation. Entropy increases as we go from solid -> liquid -> aqueous -> gas. In other words, a solid has the lowest entropy and a gas has the highest entropy. The third way is to look at the relative entropy between the reactants and the products of a chemical equation. You do this by counting up the moles of the products versus the moles of the reactants.
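The three ways of judging an entropy change described above (looking up values on an entropy table, comparing states of matter, and counting moles) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are made up for this example, the table holds approximate textbook values of standard molar entropy at 298 K that you should check against your own entropy table, and the states-of-matter check is simplified to comparing the highest-entropy state on each side of the equation.

```python
# Approximate standard molar entropies at 298 K, in J/(mol*K).
# Illustrative values only; use the table from your own textbook.
STANDARD_ENTROPY = {
    ("H2", "g"): 130.7,
    ("O2", "g"): 205.2,
    ("H2O", "l"): 69.9,
}

# Ranking from the text: solid < liquid < aqueous < gas.
STATE_RANK = {"s": 0, "l": 1, "aq": 2, "g": 3}


def _direction(before, after):
    """Turn a before/after comparison into a plain-language answer."""
    if after > before:
        return "increase"
    if after < before:
        return "decrease"
    return "unclear"


def delta_s_from_table(reactants, products):
    """Way 1: total entropy of the products minus that of the reactants."""
    def total(side):
        return sum(moles * STANDARD_ENTROPY[(formula, state)]
                   for moles, formula, state in side)
    return total(products) - total(reactants)


def compare_states(reactants, products):
    """Way 2 (simplified): compare the highest-entropy state on each side."""
    before = max(STATE_RANK[state] for _, _, state in reactants)
    after = max(STATE_RANK[state] for _, _, state in products)
    return _direction(before, after)


def compare_moles(reactants, products):
    """Way 3: compare the total moles on each side."""
    before = sum(moles for moles, _, _ in reactants)
    after = sum(moles for moles, _, _ in products)
    return _direction(before, after)


# Example reaction: 2 H2(g) + O2(g) -> 2 H2O(l)
# Each entry is (moles, formula, state).
reactants = [(2, "H2", "g"), (1, "O2", "g")]
products = [(2, "H2O", "l")]

print(round(delta_s_from_table(reactants, products), 1), "J/K")
print(compare_states(reactants, products))
print(compare_moles(reactants, products))
```

For this reaction all three approaches agree that the entropy goes down: the table gives a negative ΔS, the products are in a lower-entropy state (liquid instead of gas), and three moles of reactants become two moles of product.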
